Your digital transformation agency.

Creating the perfect blend of innovative technology solutions that drive growth, access to new channels and 360° customer engagement.

Insight.

Innovation.

Impact.

With 20 years' experience in the business, our team of experts understands that one size doesn’t fit all. We work collaboratively to deliver proven solutions that combine data-driven insights, strategic planning and innovative technology to transform your business and deliver exceptional results.

The latest projects.

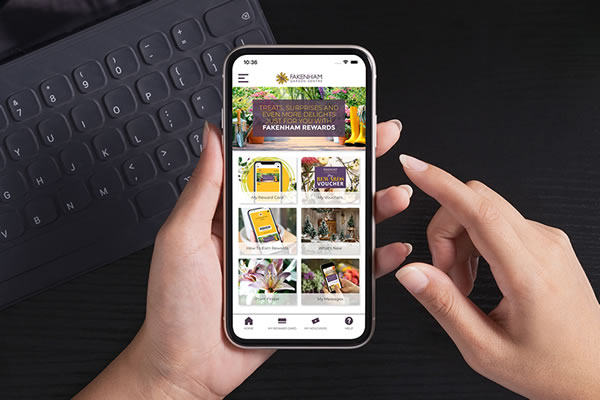

Loyalty App - Fakenham Garden Centre

Our strategy, which leverages loyalty and app-based personalisation, is helping this forward-thinking retailer increase customer engagement and footfall while reducing its marketing costs.

Ringways Motor Group

Following significant research and development, we are delighted to release our global ecommerce platform to dealerships and manufacturers alike. It addresses the many frustrations dealerships face with powerful “out of the box” ecommerce and CRM functionality that is simple to integrate, enabling them to sell better and go head-to-head with the likes of Cinch, Cazoo and others.

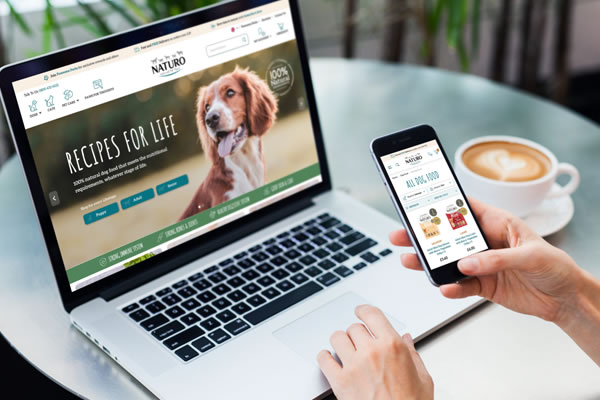

Naturo Pets

We’re proud to have developed a powerful international ecommerce site for the UK’s largest independent pet food retailer, one that reflects their brand values. Through considered UX design, the new website has delivered improved conversion performance.

Planet X

As a true marketing services business, we have not only delivered an improved online purchase experience but also supported the business by providing interim Head of E-Commerce & Marketing support as part of a business transformation strategy.

Dedicated to delivering excellence to our clients.

Over 20 years of multichannel & eCommerce experience

Proven credentials in business growth

Cost-effective solutions utilising existing technology